Welcome back to another episode of "Continuous Improvement." I'm your host, Victor, and today we're going to dive into the world of Apache Kafka and its integration with MongoDB.

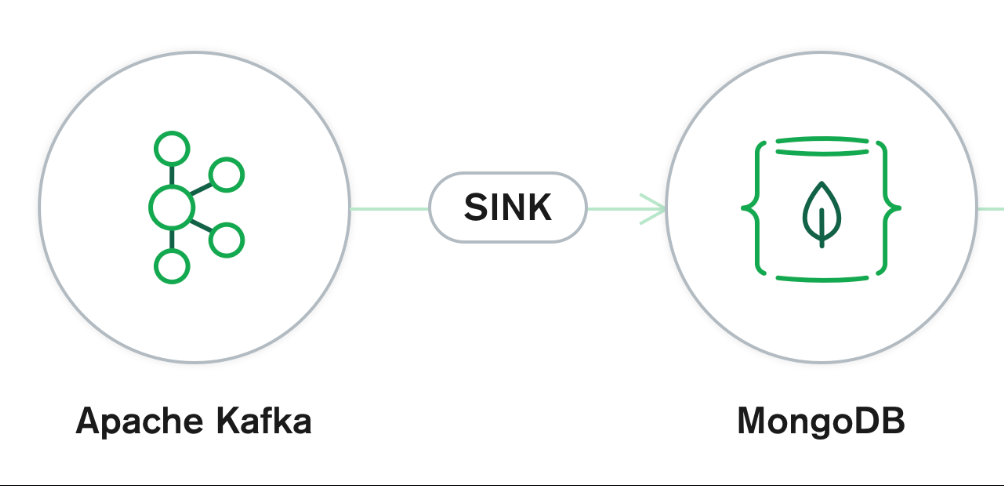

Apache Kafka is an open-source distributed event streaming platform built on a publish/subscribe model, so producers and consumers exchange messages through topics rather than talking to each other directly. One component of Kafka, known as Kafka Connect, provides a framework for connecting Kafka with various datastores, including MongoDB. In today's episode, we'll focus on using MongoDB as a data lake and explore the MongoDB Kafka sink connector, with the source connector playing a supporting role.

But before we get into that, let's start by setting up our Kafka environment. First, you'll need to download the latest Kafka version from the official Apache Kafka website. Once downloaded, extract the files and navigate to the Kafka directory.

To start our Kafka environment, we need to run the ZooKeeper service. Open a terminal window, navigate to the Kafka directory, and execute the following command:

bin/zookeeper-server-start.sh config/zookeeper.properties

Now that the ZooKeeper service is up and running, let's start the Kafka broker service. Open another terminal window, navigate to the Kafka directory, and execute the following command:

bin/kafka-server-start.sh config/server.properties

Excellent! We now have a basic Kafka environment up and running. Now let's install the MongoDB Kafka sink connector, which allows us to write data from Kafka to MongoDB.

First, let's download the required JAR file for the MongoDB Kafka Connector. Visit the official MongoDB Kafka Connector repository and download the JAR file. Once downloaded, copy it into the libs directory within your Kafka installation.

Now, let's update the config/connect-standalone.properties file to include the plugin's path. Open the file, scroll to the bottom, and update the plugin.path property to point to the downloaded JAR file.
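If it helps to see that step written out, here's a minimal sketch. The JAR file name is a placeholder made up for illustration, so substitute the name of the file you actually downloaded. KAFKA_DIR is just a helper variable that we assume points at your Kafka directory.

```shell
# Assumption: KAFKA_DIR points at the extracted Kafka directory (defaults to the current one).
KAFKA_DIR="${KAFKA_DIR:-$PWD}"
# Placeholder name: replace with the JAR file you actually downloaded.
CONNECTOR_JAR="$KAFKA_DIR/libs/mongo-kafka-connect-all.jar"

mkdir -p "$KAFKA_DIR/config"   # no-op when the directory already exists
# Append the plugin path so Kafka Connect can discover the connector classes.
echo "plugin.path=$CONNECTOR_JAR" >> "$KAFKA_DIR/config/connect-standalone.properties"
```

Appending works because a later plugin.path entry takes precedence over an earlier one in a properties file, but editing the existing line by hand is just as good.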

With the plugin installed, it's time to create the configuration properties for our MongoDB sink connector. In the /config folder, create a file named MongoSinkConnector.properties. This file will contain the necessary properties for our MongoDB sink connector to function.

Now, let's add the required properties for the message types. We'll use the JSON converter for both the key and value and disable schemas.
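As a rough sketch, those converter settings could be appended to the sink connector file like this. The property names are the standard Kafka Connect converter settings. KAFKA_DIR is a helper variable we're assuming for illustration.

```shell
# Assumption: KAFKA_DIR points at the extracted Kafka directory (defaults to the current one).
KAFKA_DIR="${KAFKA_DIR:-$PWD}"
mkdir -p "$KAFKA_DIR/config"   # no-op when the directory already exists

# Append the converter settings to the sink connector's properties file.
cat >> "$KAFKA_DIR/config/MongoSinkConnector.properties" <<'EOF'
# Treat record keys and values as plain JSON, without an embedded schema.
key.converter=org.apache.kafka.connect.json.JsonConverter
value.converter=org.apache.kafka.connect.json.JsonConverter
key.converter.schemas.enable=false
value.converter.schemas.enable=false
EOF
```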

On to the MongoDB sink connector configuration itself. Here, we define the connection URI, the database we want to write to, the collection within that database, and the change data capture handler.
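Here's one way those sink settings might look. Treat the values as assumptions: the connector name, the quickstart database, the quickstart.sampleData topic, and the local connection URI are illustrative, and only the topicData collection comes from this walkthrough.

```shell
# Assumption: KAFKA_DIR points at the extracted Kafka directory (defaults to the current one).
KAFKA_DIR="${KAFKA_DIR:-$PWD}"
mkdir -p "$KAFKA_DIR/config"   # no-op when the directory already exists

# Append the sink-specific settings to the sink connector's properties file.
cat >> "$KAFKA_DIR/config/MongoSinkConnector.properties" <<'EOF'
# Illustrative values: adjust the name, topic, URI, and database to your setup.
name=mongo-sink
connector.class=com.mongodb.kafka.connect.MongoSinkConnector
topics=quickstart.sampleData
connection.uri=mongodb://localhost:27017
database=quickstart
collection=topicData
# Interpret incoming records as MongoDB change stream events.
change.data.capture.handler=com.mongodb.kafka.connect.sink.cdc.mongodb.ChangeStreamHandler
EOF
```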

Great! Now let's create another configuration file for the MongoDB source connector. Create a file in the /config folder named MongoSourceConnector.properties. This file will contain the necessary properties for our MongoDB source connector.

In the MongoSourceConnector.properties file, we need to specify the connection URI of our MongoDB instance, the database we'll be reading from, and the collection within that database.
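A minimal sketch of that source connector file follows. The connector name, the quickstart database, the sampleData collection, and the local URI are assumptions chosen for illustration, so swap in your own values.

```shell
# Assumption: KAFKA_DIR points at the extracted Kafka directory (defaults to the current one).
KAFKA_DIR="${KAFKA_DIR:-$PWD}"
mkdir -p "$KAFKA_DIR/config"   # no-op when the directory already exists

# Write the source connector's properties file.
cat > "$KAFKA_DIR/config/MongoSourceConnector.properties" <<'EOF'
# Illustrative values: adjust the name, URI, database, and collection to your setup.
name=mongo-source
connector.class=com.mongodb.kafka.connect.MongoSourceConnector
connection.uri=mongodb://localhost:27017
database=quickstart
collection=sampleData
EOF
```

With no topic prefix configured, the source connector publishes to a topic named after the database and collection, which is why quickstart.sampleData would be the topic name here. Keep in mind that the source connector relies on change streams, which MongoDB offers only on replica sets and sharded clusters.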

Now that we have our Kafka environment set up and the MongoDB Kafka connectors configured, it's time to install MongoDB itself. We'll go through the installation steps quickly, but keep in mind that you may need to adjust some commands based on your operating system.

First, we'll need to download the MongoDB public GPG key and add it to our system. This step ensures the authenticity of the MongoDB packages.

Next, we create the MongoDB source list, which specifies the MongoDB packages' download location.

After updating the package database with the MongoDB source list, we can finally install the MongoDB packages.

If you encounter any errors related to unmet dependencies during the installation, we've provided some commands to address those issues.

Finally, let's verify the status of our MongoDB installation to ensure everything is running smoothly. Simply run the command and check the output to see if MongoDB has started successfully.
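To give you a feel for how those installation steps fit together, here's a sketch wrapped in a shell function. It assumes Ubuntu 22.04 and MongoDB 7.0, neither of which this episode specifies, so change the two variables to match your system before calling it. The function name install_mongodb is ours, not part of any tool.

```shell
# Assumptions: Ubuntu 22.04 ("jammy") and MongoDB 7.0; change both to match your system.
MONGO_VERSION="7.0"
UBUNTU_CODENAME="jammy"

# Hypothetical helper: defining it runs nothing; call install_mongodb when ready.
install_mongodb() {
  keyring="/usr/share/keyrings/mongodb-server-${MONGO_VERSION}.gpg"

  # 1. Import MongoDB's public GPG key so apt can verify the packages.
  curl -fsSL "https://www.mongodb.org/static/pgp/server-${MONGO_VERSION}.asc" \
    | sudo gpg -o "$keyring" --dearmor

  # 2. Create the source list that tells apt where the packages live.
  echo "deb [ arch=amd64,arm64 signed-by=${keyring} ] https://repo.mongodb.org/apt/ubuntu ${UBUNTU_CODENAME}/mongodb-org/${MONGO_VERSION} multiverse" \
    | sudo tee "/etc/apt/sources.list.d/mongodb-org-${MONGO_VERSION}.list"

  # 3. Refresh the package database, then install the MongoDB packages.
  sudo apt-get update
  sudo apt-get install -y mongodb-org

  # 4. Start the service and check that it is running.
  sudo systemctl start mongod
  sudo systemctl status mongod --no-pager
}
```

Nothing runs until you call install_mongodb, which gives you a chance to review the steps and adjust the version and codename first.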

Perfect! Now that we have our Kafka environment set up, the MongoDB Kafka connectors configured, and MongoDB installed, we're ready to start the Kafka Connect service.

To start Kafka Connect, open a terminal window, navigate to the Kafka directory, and execute the following command:

bin/connect-standalone.sh config/connect-standalone.properties config/MongoSourceConnector.properties config/MongoSinkConnector.properties

With Kafka Connect up and running, let's write some data to our Kafka topic. Open a new terminal window, navigate to the Kafka directory, and execute the command provided.

Fantastic! We've successfully written data to our Kafka topic. Now, let's ensure that our MongoDB sink connector is properly processing the data and writing it to the MongoDB collection.

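To verify this, we'll insert a document into the MongoDB collection that our source connector reads from. The source connector publishes that change to the Kafka topic, and the sink connector picks it up from there. Execute the MongoDB shell commands provided, and the document will be inserted.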

Finally, let's check the topicData collection in MongoDB to confirm that our connectors have successfully processed the change.
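Those last two steps might look something like the sketch below. It assumes mongosh is installed, MongoDB is listening on localhost:27017, and the source connector watches a sampleData collection in a quickstart database. Apart from topicData, those names are illustrative rather than taken from this episode.

```shell
# Assumptions: local MongoDB on the default port; quickstart and sampleData are illustrative names.
MONGO_URI="mongodb://localhost:27017/quickstart"

if command -v mongosh >/dev/null 2>&1; then
  # Insert a document into the collection the source connector reads from.
  mongosh "$MONGO_URI" --quiet --eval 'db.sampleData.insertOne({ hello: "world" })'
  # Give the connectors a few seconds to pass the change through Kafka.
  sleep 5
  # Print whatever the sink connector has written to topicData.
  mongosh "$MONGO_URI" --quiet --eval 'printjson(db.topicData.find().toArray())'
else
  echo "mongosh not found on PATH; skipping the check"
fi
```

If the second command prints an empty array, the connectors may simply need more time, so it's worth running the find again before digging into the Kafka Connect logs.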

Congratulations! You've successfully integrated Apache Kafka with MongoDB, allowing seamless data transfer between the two systems. For more information and further details, visit the MongoDB Kafka Connector documentation linked in the show notes.

That's it for today's episode of "Continuous Improvement." I hope you found this exploration of Apache Kafka and MongoDB valuable. Stay tuned for more episodes where we uncover the best practices and tools for continuous improvement in the tech world. Until then, keep improving!