Microsoft Fabric - Revolutionizing Data Analytics in the AI Era

In today's fast-paced digital world, data is the lifeblood of AI. Yet the landscape of data and AI tools is vast, with offerings such as Hadoop, MapReduce, Spark, and many more. As a Chief Information Officer, the last thing you want is to become the Chief Integration Officer, constantly stitching together multiple tools and systems. Enter Microsoft Fabric, a game-changing solution designed to simplify and unify data analytics for the era of AI.

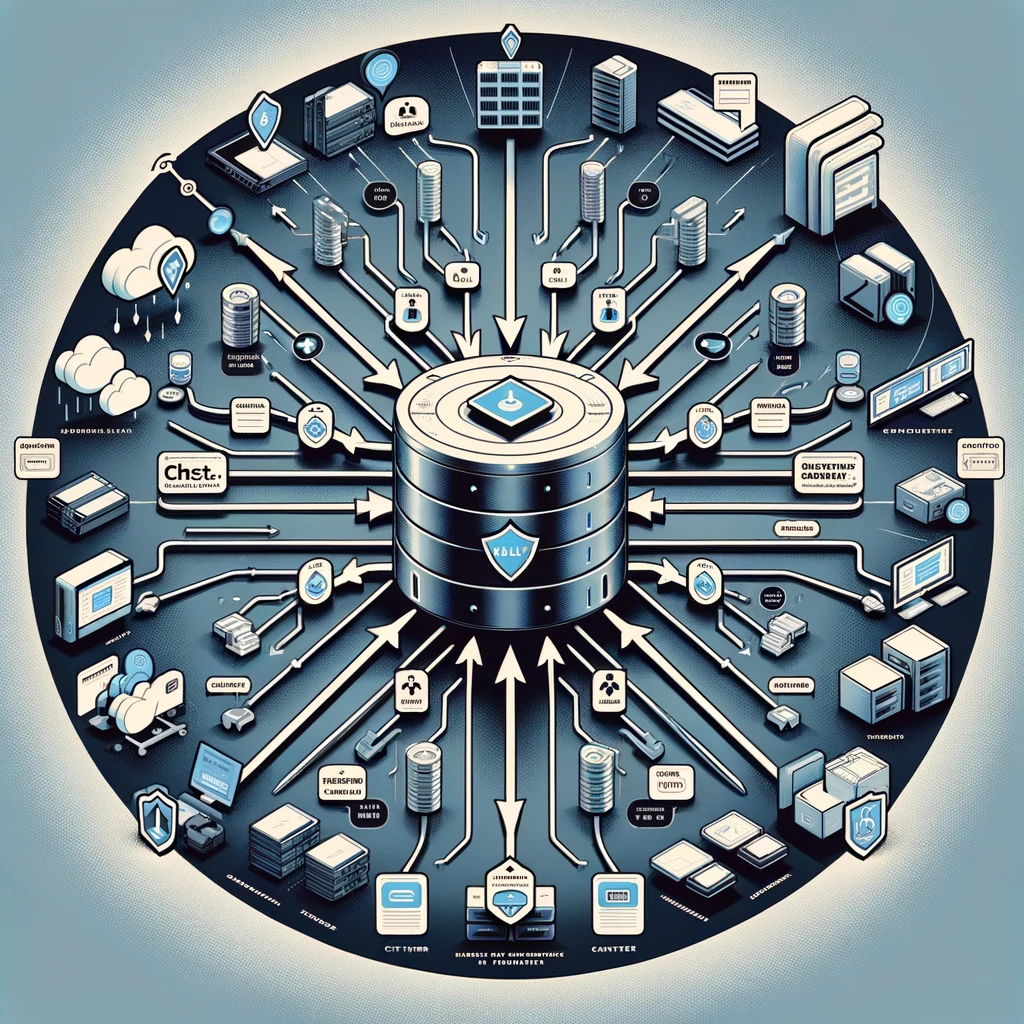

From Fragmentation to Unity: The Evolution of Data Analytics

Microsoft Fabric represents a paradigm shift in data analytics in three ways. It moves from a fragmented landscape of individual components to a unified, integrated stack. It moves from relying on a single database to harnessing all of your available data. Most importantly, it moves from treating AI as an add-on to embedding generative AI (Gen AI) into the very fabric of the platform.

The Four Core Design Principles of Microsoft Fabric

- Complete Analytics Platform: Microsoft Fabric offers a comprehensive solution that is unified, SaaS-fied, secured, and governed, ensuring that all your data analytics needs are met in one place.

- Lake-Centric and Open: At the heart of Fabric is the concept of "One Lake, One Copy": a single data lake that is open at every tier, so that data can be stored once and used by every engine.

- Empower Every Business User: The platform is designed to be familiar and intuitive, integrated seamlessly into Microsoft 365, enabling users to turn insights into action effortlessly.

- AI Powered: Fabric is turbocharged with AI, from Copilot acceleration to generative AI on your data, providing AI-driven insights to inform decision-making.

The Transition from Synapse to SaaS-fied Fabric

Microsoft Fabric marks a significant evolution from separate products such as Azure Synapse Analytics, Azure Data Factory (ADF), and Azure Cosmos DB to a unified, seamless experience. This transition embodies the shift towards a SaaS (Software as a Service) model, characterized by ease of use, cost efficiency, scalability, and accessibility.

OneLake: The OneDrive for Data

OneLake is the cornerstone of Microsoft Fabric, offering a single SaaS lake for the entire organization. It is provisioned automatically with each Fabric tenant, and all workloads store their data in intuitive workspace folders. OneLake keeps data organized, indexed, and ready for discovery, sharing, governance, and compliance, with Delta Parquet as the standard format for all tabular data.
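To make the "workspace folders" idea concrete, here is a minimal sketch of how a path into OneLake can be composed. It assumes the ADLS Gen2-compatible ABFS URI layout that OneLake exposes, where the workspace acts as the container and each item (for example a lakehouse) appears as a folder named `<item>.<ItemType>`, with Delta tables under `Tables/` and raw files under `Files/`. The helper names `onelake_abfss_path` and `lakehouse_table_path`, and the workspace, lakehouse, and table names, are hypothetical and used only for illustration; names are assumed to contain no spaces or special characters (no URL encoding is applied).

```python
# Sketch only: hypothetical helpers that compose OneLake ABFS URIs.
# Assumes the layout abfss://<workspace>@onelake.dfs.fabric.microsoft.com/<item>.<ItemType>/<path>

ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_abfss_path(workspace: str, item: str, item_type: str, *parts: str) -> str:
    """Build an ABFS URI pointing into a Fabric item stored in OneLake.

    workspace -- workspace name (plays the role of the storage container)
    item      -- item name, e.g. a lakehouse
    item_type -- item type suffix, e.g. "Lakehouse"
    parts     -- path segments inside the item, e.g. "Tables", "orders"
    """
    for name in (workspace, item, item_type, *parts):
        # Reject empty names and embedded slashes so each argument stays one path segment.
        if not name or "/" in name:
            raise ValueError(f"invalid path segment: {name!r}")
    path = "/".join([f"{item}.{item_type}", *parts])
    return f"abfss://{workspace}@{ONELAKE_HOST}/{path}"


def lakehouse_table_path(workspace: str, lakehouse: str, table: str) -> str:
    """Path of a (Delta Parquet) table in a lakehouse's Tables folder."""
    return onelake_abfss_path(workspace, lakehouse, "Lakehouse", "Tables", table)


if __name__ == "__main__":
    print(lakehouse_table_path("SalesWorkspace", "SalesLakehouse", "orders"))
    # prints "abfss://SalesWorkspace@onelake.dfs.fabric.microsoft.com/SalesLakehouse.Lakehouse/Tables/orders"
```

Because every workload reads from the same lake, a path like this could, for example, be handed to a Spark session with Delta support (`spark.read.format("delta").load(path)`) rather than copying the data into a separate store. Treat this as an illustration of the folder layout, not as an official client library.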

Tailored Experiences for Different Personas

Microsoft Fabric caters to a range of personas, including data engineers, data scientists, data analysts, data citizens, and data stewards, providing an optimized experience for each. Whether the goal is executing tasks faster or making more data-driven decisions, Fabric empowers users across the board.

Copilot: AI Assistance for All

Copilot is a standout feature of Microsoft Fabric, offering AI assistance to enrich, model, analyze, and explore data in notebooks. It helps users understand their data better, create and configure ML models through conversation, write code faster with inline suggestions, and summarize and explain code for enhanced understanding.

Adhering to Design Principles

In practice, Microsoft Fabric's design principles translate into a unified SaaS data lake with no silos, a true data mesh as a service built on OneLake, no lock-in thanks to industry-standard APIs and open file formats, and comprehensive security and governance.

In conclusion, Microsoft Fabric is a transformative solution that simplifies and unifies data analytics in the era of AI. Guided by its core design principles, it empowers business users, puts the power of AI to work, and offers a seamless, SaaS-fied experience, making it an essential tool for any organization looking to harness the full potential of its data.